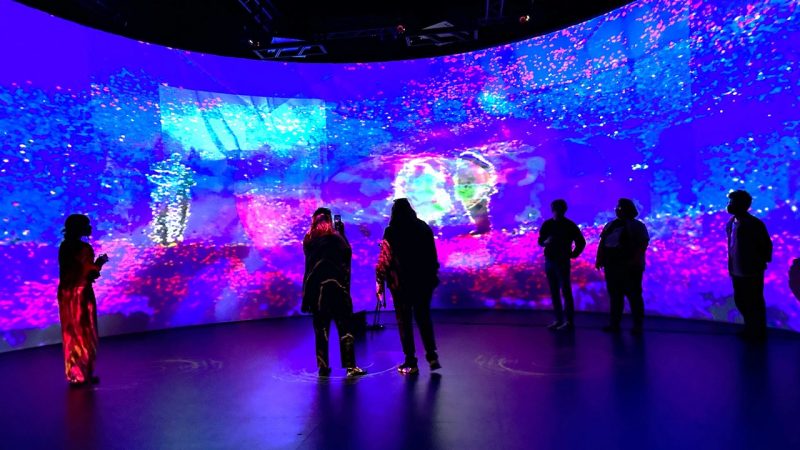

The Cube at Virginia Tech

We host a collaborative research environment called the Cube, a completely reconfigurable immersive space unlike any other. It supports work ranging from embodied data analytics to drone research to cutting-edge creative practice.

To accomplish this, the Cube houses a motion capture system that can capture human performance (and some non-human performance) across the entire space, one of the largest immersive audio systems in the world, and a new projection system that enables projection mapping on all walls.

Unique in the world, the Cube is a five-story-high, state-of-the-art theater and high-tech laboratory that serves multiple platforms of creative practice by faculty, students, and national and international guest artists and researchers.

The Cube is a highly adaptable space for research and experimentation in big data exploration, immersive environments, intimate performances, audio and visual installations, and experiential investigations of all types. This facility is shared between ICAT and the Center for the Arts at Virginia Tech.

The Cube is one of the very few places of its kind worldwide. It is 42 feet high (five stories), with an interior stage space 48 feet long and 40 feet wide. The Cube has the features of a black box theater, similar to a soundstage, which makes it possible to stage almost anything technological or artistic inside it, from musical performances to robot ensembles to XR simulations to art exhibitions.

Currently, the Cube is equipped with three modular 4K projectors (two rated at 10,000 lumens each and one capable of up to 30,000 lumens), one disguise vx4 (a multimedia presentation server and projection mapping system with four 4K UHD outputs), 134.6 channels of 3D spatial audio, nine Holosonic directional loudspeakers, sixteen channels of wireless headphone transmitters, a 16-camera Qualisys Optical Tracking System, a triptych immersive projection screen, four channels of Pozyx ultra-wideband (UWB) radio-frequency tracking, and nineteen miles of patchable analog and digital audio, video, and data connections. This patching system connects the entire Center for the Arts and has a private 10G fiber link to the Andrews Information Systems Building, a secure data center housing additional computing and storage. These technical capabilities allow innovative projects to be created and experienced in the Cube; a few of them are worth mentioning.

Embodied Virtual Reality for Training and Performance

A project to conduct a feasibility study of a fully immersive virtual environment for training American football quarterbacks. The study seeks to create a flexible platform that integrates proprioceptive, kinesthetic, physiological, visual, and auditory elements to enhance athletic training and measure psychomotor responses.

Liminal Spaces

November 2-6, 2022

The first electroacoustic musical composition (to our knowledge) to make use of a layered immersive spatial audio system integrating bone conduction headphones, the Tesseract, and the Cube. Audience feedback and our own observations indicate rich and unique spatial experiences, radically different from binaural sound or periphonic HDLA soundfields alone, resulting in a compelling new spatial infrastructure for composing immersive music. Human factors students have since used this layered system to explore and innovate with a setup that no other students have had a chance to experience.